Optimal Policies for MDPs

Revision as of 11:24, 15 February 2023 by Admin

Description

In an MDP (Markov decision process), a policy specifies which action to take in each state. An optimal policy is one that, in every state, chooses the action maximizing the expected "return" (or "utility") from that state. The problem is to find such an optimal policy for a given MDP.
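
To make the definition concrete, here is a minimal Python sketch of value iteration on a toy MDP. The two-state MDP, the names `TOY_MDP`, `q_value` and `value_iteration`, the discount factor `gamma = 0.5`, and the stopping tolerance are all illustrative assumptions, not part of this page. The sketch repeatedly applies the Bellman optimality update and then reads off a policy that is greedy with respect to the resulting values.

```python
# Hypothetical toy MDP (an assumption for illustration only).
# TOY_MDP[state][action] is a list of (probability, next_state, reward).
TOY_MDP = {
    0: {"stay": [(1.0, 0, 0.0)], "move": [(1.0, 1, 1.0)]},
    1: {"stay": [(1.0, 1, 2.0)], "move": [(1.0, 0, 0.0)]},
}


def q_value(mdp, values, state, action, gamma):
    """Expected return of taking `action` in `state`, then following `values`."""
    return sum(p * (r + gamma * values[s2]) for p, s2, r in mdp[state][action])


def value_iteration(mdp, gamma=0.5, tol=1e-9):
    """Sweep Bellman optimality updates until the largest change is below tol."""
    values = {s: 0.0 for s in mdp}
    while True:
        delta = 0.0
        for s, actions in mdp.items():
            best = max(q_value(mdp, values, s, a, gamma) for a in actions)
            delta = max(delta, abs(best - values[s]))
            values[s] = best  # in-place update, reused later in the same sweep
        if delta < tol:
            break
    # The greedy policy picks, in each state, the action with the highest value.
    policy = {
        s: max(actions, key=lambda a: q_value(mdp, values, s, a, gamma))
        for s, actions in mdp.items()
    }
    return values, policy


values, policy = value_iteration(TOY_MDP)
print(values, policy)
```

In this toy example the greedy policy moves from state 0 to state 1 and then stays there, because staying in state 1 collects the larger reward on every step.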

Parameters

No parameters found.

Table of Algorithms

| Name | Year | Time | Space | Approximation Factor | Model | Reference |

|---|---|---|---|---|---|---|

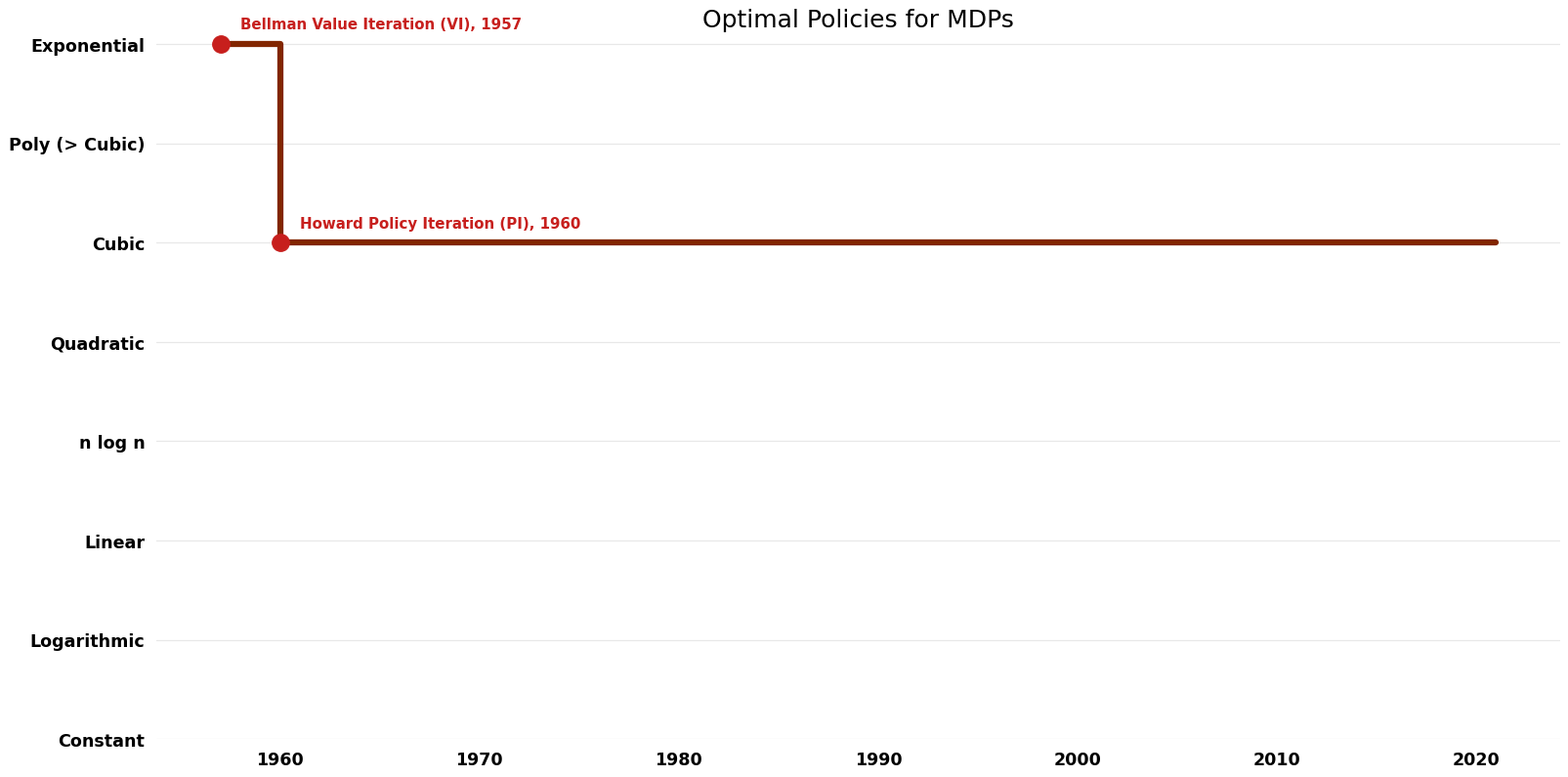

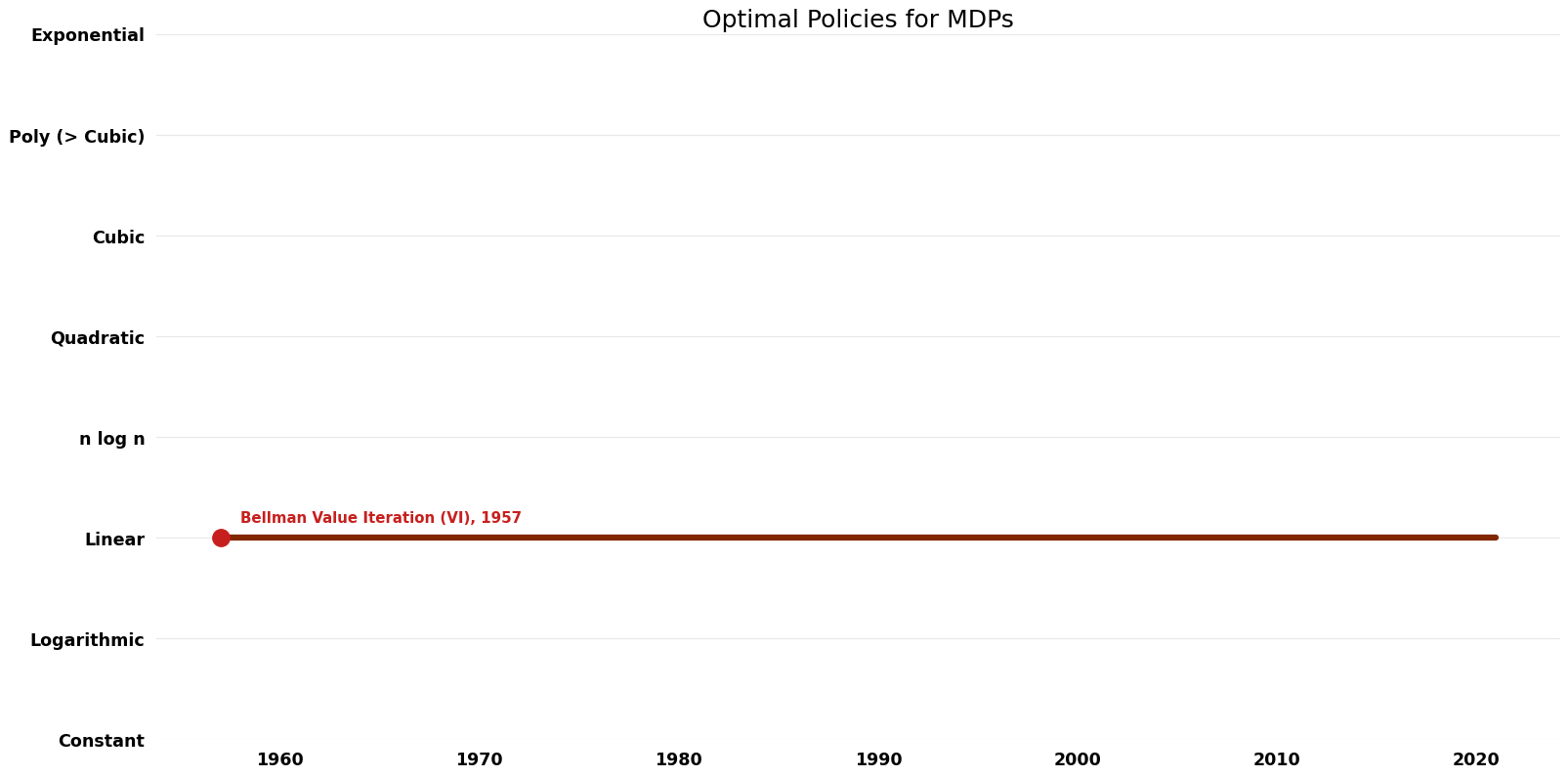

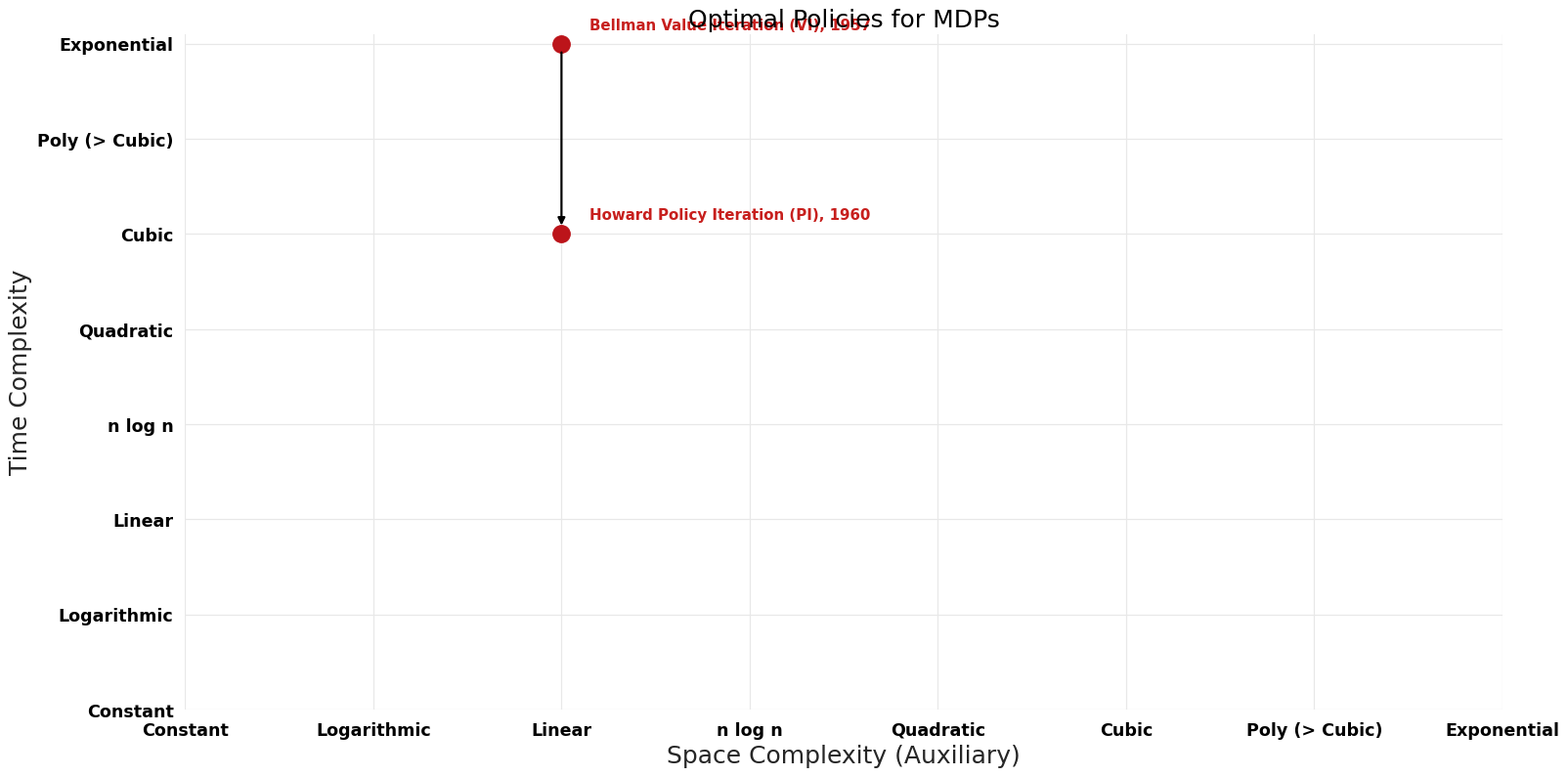

| Bellman Value Iteration (VI) | 1957 | $O({2}^n)$ | $O(n)$ | Exact | Deterministic | Time |

| Howard Policy Iteration (PI) | 1960 | $O(n^{3})$ | $O(n)$ | Exact | Deterministic | Time |

| Puterman Modified Policy Iteration (MPI) | 1974 | $O(n^{3})$ | $O(n)$ | Exact | Deterministic |
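
As a rough illustration of the policy iteration idea listed in the table, the following Python sketch alternates policy evaluation with greedy policy improvement until the policy stops changing. It is a sketch under stated assumptions: the toy MDP, the names `TOY_MDP`, `evaluate_policy` and `policy_iteration`, and the discount factor are hypothetical, and the evaluation step uses repeated sweeps to a tolerance instead of a direct linear solve.

```python
# Hypothetical toy MDP (an assumption for illustration only).
# TOY_MDP[state][action] is a list of (probability, next_state, reward).
TOY_MDP = {
    0: {"stay": [(1.0, 0, 0.0)], "move": [(1.0, 1, 1.0)]},
    1: {"stay": [(1.0, 1, 2.0)], "move": [(1.0, 0, 0.0)]},
}


def backup(mdp, values, state, action, gamma):
    """One-step expected return of `action` in `state` under `values`."""
    return sum(p * (r + gamma * values[s2]) for p, s2, r in mdp[state][action])


def evaluate_policy(mdp, policy, gamma, tol=1e-12):
    """Approximate the value of a fixed policy by repeated sweeps."""
    values = {s: 0.0 for s in mdp}
    while True:
        delta = 0.0
        for s in mdp:
            new_v = backup(mdp, values, s, policy[s], gamma)
            delta = max(delta, abs(new_v - values[s]))
            values[s] = new_v
        if delta < tol:
            return values


def policy_iteration(mdp, gamma=0.5, eps=1e-9):
    """Alternate evaluation and greedy improvement until the policy is stable."""
    # Start from an arbitrary policy: the first listed action in every state.
    policy = {s: next(iter(actions)) for s, actions in mdp.items()}
    while True:
        values = evaluate_policy(mdp, policy, gamma)
        stable = True
        for s, actions in mdp.items():
            best = max(actions, key=lambda a: backup(mdp, values, s, a, gamma))
            current_q = backup(mdp, values, s, policy[s], gamma)
            # Switch only on a strict improvement so ties cannot cause cycling.
            if backup(mdp, values, s, best, gamma) > current_q + eps:
                policy[s] = best
                stable = False
        if stable:
            return values, policy


pi_values, pi_policy = policy_iteration(TOY_MDP)
print(pi_values, pi_policy)
```

Stopping the evaluation step after a fixed, small number of sweeps instead of running it to the tolerance gives the flavor of modified policy iteration.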